If you are building products for AI agents, then a skill, CLI, MCP, or API is not enough

If you have a product that is already being used by AI agents through Claude Code, Codex, Cursor, Hermes, OpenCode, Paperclip, and similar runtimes, then this post is for you.

After building Agent Analytics, I feel like I understand this category much better than I did a year ago. You start seeing the same product mistakes over and over.

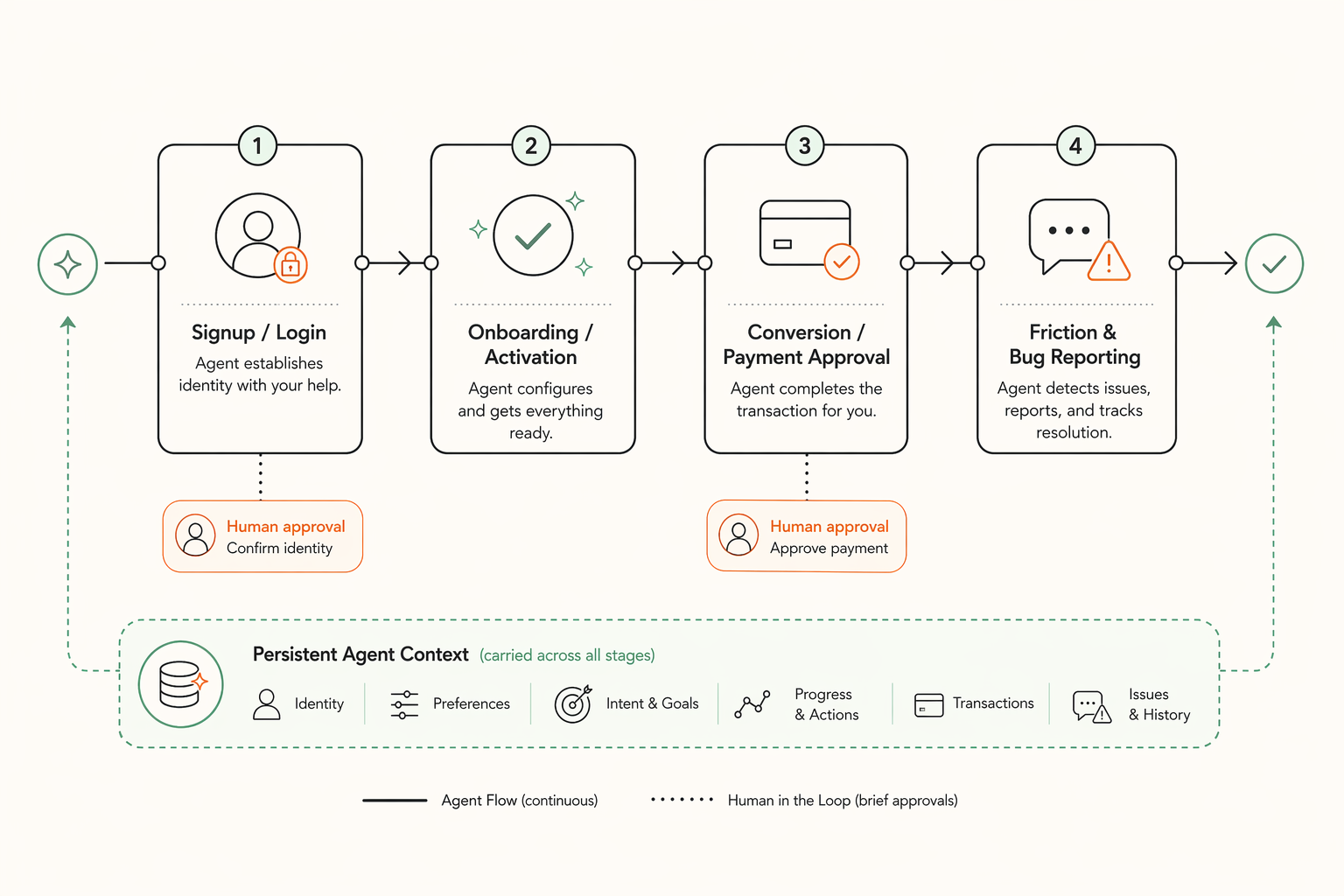

The goal: the human’s AI agent should be able to get your product or service up and running end to end, with as little human intervention as possible, and only at the right approval moments.

TLDR:

| Step | What’s needed | How to solve it | What’s the problem today |

|---|---|---|---|

| 1 Signup / Login | Login should fit how the agent is actually working | Your service should support normal browser login when the human is live with the agent and detached login when the agent is running async (like OpenClaw🦞) | Most products still rely on pasted API keys, localhost-only assumptions, manual setup steps, or browser-only flows |

| 2 Onboarding / Activation | Get to the first real win without losing momentum | The agent should be able to start the first meaningful action and produce a useful outcome on your service (provisioning / account admin) | Many products treat install as the finish line instead of the start of a user journey |

| 3 Conversion | Convert user from free to paid | The agent should present its human with the upgrade payment link at the right moment and explain why it is needed | Most products hit a wall with dead-end error responses or generic plan messaging |

| 4 Friction / Bug Reports | Return recovery help and capture structured feedback | Your service should give the agent good recovery guidance in the skill and CLI, and expose a feedback endpoint to report the exact friction, failed step, and missing guidance | Most products still return vague errors, thin help text, and no structured way for the agent to report what broke |

That is the real interface.

1. Login should match whether the agent is live or async

If your product wants to work with agents, login cannot be one generic flow. In Claude Code, Codex, Cursor, and Hermes, the human is often live with the agent, so normal browser approval works. In Paperclip, OpenClaw, remote workers, and scheduled jobs, the agent needs a detached approval path instead. In both cases the human should approve identity in the browser and the agent should continue with scoped access. I wrote more about that here: The Login Pattern AI Agents Need

2. Onboarding and activation should get the agent to a real first win

Installation is not activation. The agent should be able to complete setup, provisioning, and get to a first useful result without forcing the human into manual account-admin detours. The product should keep the task moving. In Agent Analytics, agents can fully set up the user account after login.

3. Conversion should let the human approve payment while the agent keeps going

When the agent is the one using your product, conversion cannot feel like a human leaving the task to go figure out billing. The paywall-to-payment-details step should be frictionless: the human’s AI agent should explain exactly why paid capability is needed, present a direct signed payment link at the moment of need, and then continue from the blocked step right after approval without losing the thread. I wrote more about that here: If You Build for AI Agents, Your Upgrade Flow Needs to Change

4. Friction and bugs should return help and feed back into the product

When something breaks, the agent is usually the one staring at the failure, not the human. So the product should return useful guidance in the skill and CLI, and provide a feedback endpoint so user agents can send structured reports about friction, failed steps, missing guidance, and bugs. I wrote more about that here: The Feedback Endpoint Claude Code and Codex Need

If your product ships only a skill, a CLI, MCP, or an API, you have exposed a surface, not designed the interface. The real interface is whether the agent can carry its human through your product journey.